Company News

Aug 8, 2018

Press Release: Robots to learn more human-like behaviour without a real-world set-up

The Shadow Robot Company, experts at grasping and manipulation for robotic hands, have teamed up with OpenAI, a San Francisco-based AI research company, for the second time to prove that agents trained in simulation can effectively perform human-like tasks without real-world replication of training.

Shadow’s Dexterous Hand manipulates physical objects with unprecedented human-like dexterity under the command of OpenAI’s robotic system called Dactyl. Dactyl is trained entirely in simulation using the MuJoCo physics engine. This simulation is only a rough approximation of the real robotic set-up, but through domain randomization (altering aspects of the simulated environment, such as its physics and visual appearance), Dactyl can learn in simulation how to solve real-world tasks without needing accurate modelling of the real world or demonstrations by humans.
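To illustrate the idea of domain randomization, here is a minimal sketch in Python. It is not OpenAI's code: the parameter names, the value ranges, and the `sample_episode_params` helper are all hypothetical, chosen only to show how each simulated training episode could be given slightly different physics and visuals.

```python
import random
from dataclasses import dataclass


@dataclass
class EpisodeParams:
    """Hypothetical per-episode simulation settings (illustrative only)."""
    friction: float         # surface friction coefficient
    object_mass_kg: float   # mass of the manipulated object
    light_intensity: float  # brightness of the rendered scene
    object_rgb: tuple       # colour of the object in the camera images


def sample_episode_params(rng: random.Random) -> EpisodeParams:
    """Draw a fresh set of physics and appearance settings for one episode.

    The ranges below are assumptions made up for this sketch.
    """
    return EpisodeParams(
        friction=rng.uniform(0.5, 1.5),
        object_mass_kg=rng.uniform(0.03, 0.12),
        light_intensity=rng.uniform(0.3, 1.0),
        object_rgb=tuple(rng.random() for _ in range(3)),
    )


# Each training episode sees a differently configured simulated world.
rng = random.Random(0)
episodes = [sample_episode_params(rng) for _ in range(5)]
```

Because no two episodes share exactly the same settings, a policy trained this way cannot rely on any one version of the simulation being accurate.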

While Dactyl doesn’t need humans to input knowledge to teach it, the way in which it learns is certainly human-like. Just as humans learn from failure and through trial and error, Dactyl uses reinforcement learning to improve. After learning the techniques – in this case, reorienting several kinds of objects – Dactyl can then transfer its knowledge to control the Shadow Hand and perform goal-orientated tasks effectively, without the fuss of fine-tuning.

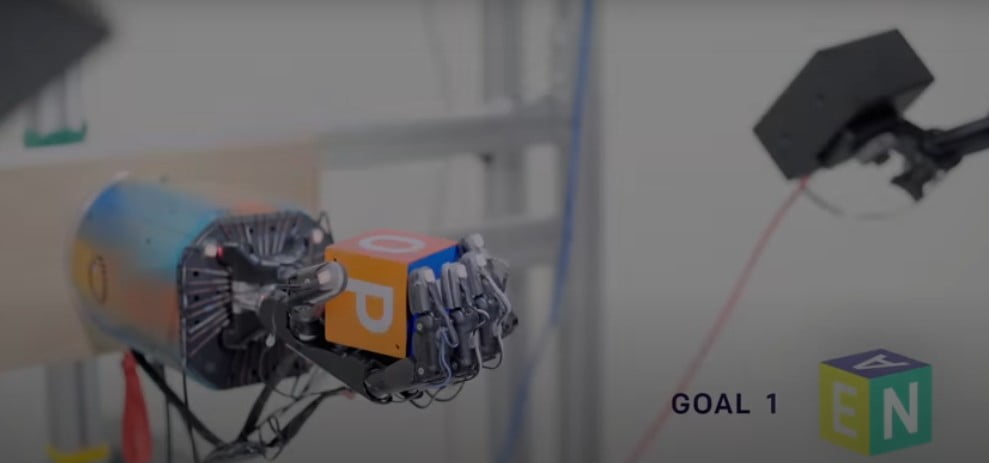

The Shadow Hand is designed to mimic the human hand: it is the size of a human male’s hand, has a total of 24 joints, and matches the human hand’s range of movement. Under the control of OpenAI’s Dactyl software, it grasps and manipulates objects with state-of-the-art precision using grasps observed in humans, such as tripod (a grip that uses the thumb, index finger, and middle finger), prismatic (a grip in which the thumb and finger oppose each other), and tip pinch grips. It also uses what Dactyl has learned to twist and toss the object into the desired position.

Examples of dexterous manipulation behaviours autonomously learned by Dactyl.

After you place an object – such as a cube or a block – in the palm of the Shadow Dexterous Hand, you ask Dactyl to move it around; for example, to rotate the cube to put a new face on top. Dactyl’s network is told the coordinates of the fingertips and uses images from three regular cameras positioned around Shadow’s Hand. OpenAI tested whether Dactyl could achieve 50 rotations before it dropped the object or failed to find a movement. The idea behind the randomized training is to provide the system with a variety of experiences rather than to maximize realism.
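The 50-rotation test described above can be pictured as a simple counting loop. The sketch below is an assumption about how such a trial might be scored, not OpenAI's evaluation code: `attempt_rotation` is a hypothetical callback that returns `True` when one rotation succeeds and `False` when the object is dropped or no movement is found.

```python
MAX_ROTATIONS = 50  # target number of rotations mentioned in the test


def count_consecutive_rotations(attempt_rotation, max_rotations=MAX_ROTATIONS):
    """Count successful rotations until the first failure or the cap.

    `attempt_rotation` is a hypothetical zero-argument callable that
    returns True on success and False on a drop or a failed movement.
    """
    successes = 0
    while successes < max_rotations:
        if not attempt_rotation():
            break  # the trial ends at the first failure
        successes += 1
    return successes


# Example with a stubbed trial: two successes, then a drop.
outcomes = iter([True, True, False])
stub_result = count_consecutive_rotations(lambda: next(outcomes))
```

A trial that reaches the cap returns 50; any earlier failure returns the number of rotations completed up to that point.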